Why “completed” retail ops checklists still lead to execution gaps

Most Heads of Retail do not struggle with defining what needs to be done. They struggle with knowing whether it is actually happening.

And in most cases, this entire system runs on one thing: checklists.

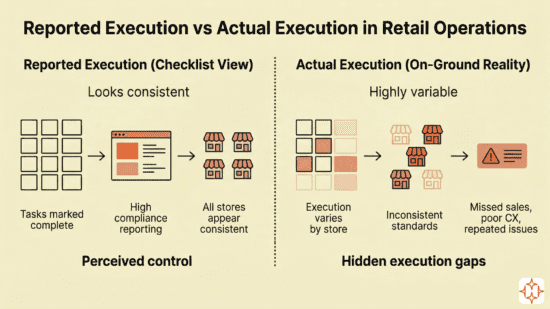

On paper, checklist execution looks clean. Stores report high completion rates. Audits do not consistently flag issues. Dashboards show stability.

And yet, the business tells a different story.

- Some stores underperform without a clear reason

- Customer experience varies across locations

- The same operational issues keep coming back even after they have been marked as resolved

At that point, it stops being an execution problem.

It becomes a checklist visibility problem.

More specifically, it is a structural issue in how checklists capture execution, enable verification, and carry information across levels.

One checklist never works for everyone

Most retail systems are built on standardization.

One checklist. One format. One way of reporting.

It is rolled out across store staff, managers, cluster heads, and leadership.

This feels efficient. But it ignores a fundamental reality.

Each level in retail is solving a different problem.

- Store staff focus on completing tasks in real time

- Managers focus on ensuring tasks were done correctly

- Cluster managers focus on patterns across stores

- Leadership focuses on business outcomes

When all of them rely on the same checklist, the meaning of “done” starts changing at every level.

What “done” actually looks like on the ground

Consider a normal store during peak hours.

A staff member is managing customers, handling billing support, and trying to keep up with operational tasks. Somewhere in between, they complete a checklist item like checking trial room cleanliness.

They mark it done.

In that moment, it feels reasonable. They may have checked quickly, assumed things were fine, or moved on because something more urgent came up.

But from that point onward, the system records a clean signal. The task is complete.

What is missing is the quality of execution.

And once that signal moves upward, every layer treats it as reliable.

Managers end up sampling execution instead of verifying it

By the time the checklist reaches the store manager, everything appears in order.

Now the expectation shifts to verification.

But verification depends on what the system makes visible.

If all tasks appear complete, there is no clear way to identify what needs attention. Managers recheck a few critical areas, ignore the rest, and move forward.

Over time, this creates a pattern.

Managers are not verifying execution. They are sampling it.

This typically shows up as:

- Manual rechecking of selected tasks

- Focus only on high-risk areas

- Issues being caught inconsistently

If verification depends on effort, it will always remain partial.

At the cluster level, variation gets hidden

Cluster managers operate on summaries, not individual tasks.

And summaries tend to smooth out differences.

So most stores appear broadly compliant.

But inside that “compliance,” execution varies. Some stores rush tasks. Some interpret SOPs differently. Some consistently under-execute but still report completion.

Because the system does not surface patterns, these differences remain invisible.

This is where problems start scaling.

- Stores look equally compliant

- Issues repeat across locations

- No clear pattern is identified

What appears as consistency is often a lack of visibility.
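One way to make this kind of variation visible is to count how often the same issue shows up across stores, instead of reading each store's summary in isolation. The sketch below is illustrative only: the `IssueReport` record, its field names, and the `min_stores` threshold are assumptions made for this example, not part of any specific product.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class IssueReport:
    """Hypothetical record of one issue logged at one store."""
    store_id: str
    task: str  # e.g. "trial_room_cleanliness"


def recurring_issues(reports, min_stores=2):
    """Return {task: sorted store ids} for tasks flagged in at least `min_stores` stores."""
    stores_by_task = defaultdict(set)
    for report in reports:
        stores_by_task[report.task].add(report.store_id)
    return {
        task: sorted(stores)
        for task, stores in stores_by_task.items()
        if len(stores) >= min_stores
    }


# Made-up sample data: one issue appears in two stores, another in only one.
reports = [
    IssueReport("store_a", "trial_room_cleanliness"),
    IssueReport("store_b", "trial_room_cleanliness"),
    IssueReport("store_a", "price_tags"),
]
patterns = recurring_issues(reports)
print(patterns)
```

Grouping by task rather than by store is the design choice that matters here: an issue that looks isolated in each store's report becomes a pattern once the stores that share it are listed together.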

By the time it reaches leadership, the view is clean but incomplete

At the leadership level, everything is consolidated into dashboards.

Completion rates are high. Audit scores are stable. Escalations are limited.

It looks like control.

But this view is built on reported execution, not verified execution.

A simple way to test this is to look for the following combinations:

- High completion with inconsistent outcomes

- Strong compliance scores with recurring issues

- Stable reports with unpredictable store performance

If these exist together, the system is not capturing reality.
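The test above can be sketched as a simple filter over per-store summaries. This is a minimal illustration under stated assumptions: the `StoreSummary` fields and both thresholds are hypothetical, and real data would need its own definitions of "high completion" and "recurring issue".

```python
from dataclasses import dataclass


@dataclass
class StoreSummary:
    """Hypothetical per-store rollup; field names are assumptions."""
    store_id: str
    completion_rate: float  # share of checklist items marked done, 0.0 to 1.0
    reopened_issues: int    # issues that came back after being marked resolved


def suspicious_stores(summaries, min_completion=0.95, max_reopened=0):
    """Return ids of stores that report high completion yet keep seeing issues return."""
    return [
        s.store_id
        for s in summaries
        if s.completion_rate >= min_completion and s.reopened_issues > max_reopened
    ]


# Made-up sample data for illustration.
summaries = [
    StoreSummary("store_a", 0.98, 3),  # high completion, recurring issues
    StoreSummary("store_b", 0.97, 0),  # high completion, no recurring issues
    StoreSummary("store_c", 0.80, 4),  # low completion is already visible
]
flagged = suspicious_stores(summaries)
print(flagged)
```

The filter deliberately ignores stores with low completion. Those already show up on a dashboard; the stores worth a closer look are the ones where the reported numbers and the outcomes disagree.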

A single missed task can move through every layer without friction

Take a basic task like a fire safety check.

At the store level, it gets marked complete, possibly without a thorough inspection. The manager does not recheck it because nothing flags it. The cluster manager sees no pattern. Leadership sees high compliance.

At no point does the system slow this down.

This is how risk builds in retail: not through large failures, but through small, repeated assumptions.

A checklist is being stretched across roles that need different things

A checklist in retail is expected to do too much at once.

It is used to drive execution, enable verification, reveal patterns, and support decisions.

Each of these requires a different structure.

| Level | What they need | What the checklist should do |
| --- | --- | --- |
| Store staff | Clarity and speed | Help complete tasks quickly and correctly |
| Store manager | Confidence in execution | Show what needs verification and provide proof |
| Cluster manager | Visibility across stores | Highlight patterns and repeated gaps |
| Leadership | Business impact | Connect execution to retail store KPIs |

When one checklist is used across all levels, it becomes insufficient for each of them.

Moving to digital tools does not solve the underlying problem

Most teams respond by adopting digital tools.

They move from paper to Excel, from Excel to WhatsApp, or to a digital checklist app for retail.

This improves tracking and speed.

But the structure remains unchanged.

So the same issues continue:

- Completion is recorded without validation

- Data is available but not actionable

- Reports exist but are not fully trusted

Digital systems often make incorrect data move faster.

They do not make it more reliable.

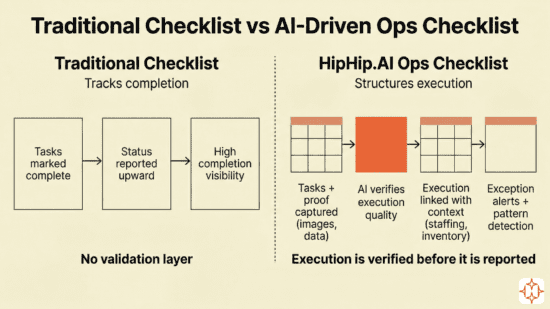

What changes with HipHip.AI’s Ops Checklist (not just a form)

HipHip.AI’s Ops Checklist is built to structure how execution is captured and understood across retail operations. It connects every task to proof, timing, and context, ensuring that what gets marked as “done” holds the same meaning across levels.

At its core, it brings together a few key capabilities:

- Tasks are supported with proof of execution (images, inputs, validations)

- Execution is tracked with time context, not just completion status

- Inputs are validated for quality, not just recorded

- Signals are connected across systems: staffing, inventory, training, and issues

This is what allows an AI-driven checklist to function differently. It evaluates execution, surfaces what needs attention, and carries reliable signals upward without losing context.
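To make the idea of tying a task to proof and timing concrete, here is a minimal sketch of what such a record could look like. It is not HipHip.AI's actual schema or API: `TaskRecord`, its fields, and `attention_reasons` are hypothetical names chosen for illustration. The sketch only checks whether proof exists and whether the task was on time; it does not evaluate the content of an image.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class TaskRecord:
    """Hypothetical checklist entry; not any product's actual schema."""
    name: str
    due_by: datetime
    completed_at: Optional[datetime] = None
    proof_image: Optional[str] = None  # path or id of an uploaded photo


def attention_reasons(task):
    """List the reasons a task may still need a manager's attention."""
    reasons = []
    if task.completed_at is None:
        reasons.append("not completed")
    elif task.completed_at > task.due_by:
        reasons.append("completed late")
    if task.proof_image is None:
        reasons.append("no proof attached")
    return reasons


# Made-up example: both tasks are "done", but only one is on time with proof.
due = datetime(2024, 1, 1, 10, 0)
late_task = TaskRecord("display_update", due, datetime(2024, 1, 1, 12, 30))
clean_task = TaskRecord("price_tags", due, datetime(2024, 1, 1, 9, 15), "img_001.jpg")

print(attention_reasons(late_task))
print(attention_reasons(clean_task))
```

A plain completion flag would mark both tasks identically. Keeping the timestamp and the proof reference on the record is what lets a manager see only the exceptions instead of rechecking everything.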

Use case: A new campaign launch day.

Every store is expected to update displays, place new SKUs, and correct pricing before peak hours. Store teams submit images of displays and product placement as part of the checklist. These images are evaluated for execution quality. Missing price tags, incorrect SKU placement, or incomplete displays are flagged immediately. If a store completes setup after peak hours, that delay is also captured. So the checklist reflects not only whether the setup was done, but also how well and when.

For a store manager, this highlights where rollout is delayed or incorrectly executed, without needing to review every task.

At the cluster level, repeated gaps become visible: stores that consistently miss timelines, locations where execution drops during peak hours, or regions where standards are not followed.

At the leadership level, rollout visibility is tied to execution quality and timing, and to how they affect sales windows and customer experience.

The same structure extends into everyday operations. Staffing plans align with actual coverage during peak hours, stock audits connect with real inventory movement, training links to task readiness, and store-level issues move into tracked resolution flows with accountability.

Execution flows upward without distortion, and every level works off the same version of reality.

Retail execution breaks in small gaps, not big failures

Retail execution rarely breaks in obvious ways. It breaks in small gaps between what is done, what is reported, and what is understood.

If the same checklist is used across every level, those gaps will persist.

Because each level is solving a different problem.

Until the system reflects that, execution will continue to look fine on paper and inconsistent on the ground.

Frequently asked questions

- Why does using a single checklist across all roles lead to execution gaps?

A single checklist assumes that all roles need the same information.

In reality:

- Store teams need clarity to execute

- Managers need proof to verify

- Cluster managers need patterns across stores

- Leadership needs insights tied to performance

When one structure is used across all levels, each layer interprets “completion” differently. This creates multiple versions of reality inside the same system.

- Where does execution actually break in a multi-store retail hierarchy?

Execution rarely breaks at one point. It degrades across layers:

- Tasks are marked complete without full execution at the store level

- Managers selectively verify due to lack of signals

- Cluster managers fail to see patterns across stores

- Leadership receives a simplified but incomplete view

The failure is not in task execution alone, but in how the record of that execution is carried through the hierarchy.

- Why do managers struggle to ensure task quality even with checklists in place?

Most checklist systems are designed for completion, not verification.

Managers typically see the status of tasks, not proof of execution.

This forces them to rely on:

- Manual checks

- Experience-based judgment

Without structured verification, managers cannot scale quality control across all tasks.

- Why do cluster managers fail to identify recurring issues across stores?

Because most systems present store-level data, not cross-store patterns.

As a result:

- Each issue appears isolated

- Repetition is not flagged

- Systemic gaps remain invisible

Unless the checklist system aggregates and structures data for pattern detection, recurring issues remain undiagnosed.

- Why do leadership dashboards often show stability despite operational gaps?

Dashboards reflect what is reported, not what is validated.

As data moves upward:

- Execution is simplified

- Variations are smoothed out

- Context is lost

This creates a clean but incomplete view, where high compliance can coexist with inconsistent performance.

- What does a role-based checklist system change in practice?

It aligns each level with what they actually need to act on:

- Store teams focus on accurate execution

- Managers focus on efficient verification

- Cluster managers focus on identifying patterns

- Leadership focuses on decision-making linked to outcomes

This removes interpretation gaps and creates a single, consistent version of reality across the organization.

- How is AI evolving traditional checklist capabilities?

Traditional checklists are designed to record whether a task was completed. AI changes this by evaluating how the task was executed, when it was done, and whether it meets expected standards.

Instead of relying only on manual inputs, AI enables:

- Proof-based validation using images or structured inputs to verify execution quality

- Context-aware tracking that captures timing, sequence, and consistency of tasks

- Pattern detection to identify repeated gaps across stores without manual analysis

- Exception surfacing that highlights only the tasks that require attention

This shifts the role of a checklist from a static tracking tool to a system that interprets execution and makes it actionable across levels.